The Quality Imperative

📑 Table of Contents

- 1. Executive Summary

- 2. Introduction: The High-Stakes World of Programmatic Ad Operations

- 3. The Pre-Intervention Landscape: A Case Study in Systemic Fragility

- 4. The Strategic Blueprint: A Four-Pillar Transformation Framework

- 5. The Knowledge Nexus: Creating a Dynamic Learning Repository

- 6. Measuring Success: Quantitative and Qualitative Results

- 7. Analysis: Key Success Factors and Replicable Principles

- 8. Conclusion: From Firefighting to Future-Proofing — The New Quality-Ops Model

- 9. Appendix: Implementation Toolkit

1. Executive Summary

In the high-velocity, high-stakes environment of programmatic advertising, campaign quality is not a luxury—it is the bedrock of client trust, campaign performance, and operational efficiency. Yet, many Ad Operations teams operate in a perpetual state of firefighting, plagued by high error rates and missed deadlines. This white paper details a comprehensive quality transformation within a DSP trafficking team that reduced its error rate from 25% to <1% and improved SLA adherence from 75% to 97% within just 2-3 months.

The transformation was not achieved through quick fixes but through the implementation of a robust, four-pillar framework:

(1) A standardized QA process,

(2) A centralized ticketing system,

(3) A dedicated QA team, and

(4) A disciplined Root Cause Analysis (RCA) methodology.

This framework, supported by a dynamic knowledge base, turned sporadic quality checks into a continuous improvement engine. This paper provides a replicable blueprint for any AdOps leader seeking to eliminate errors, build client confidence, and create a scalable, sustainable model for quality assurance in a digital-first world.

2. Introduction: The High-Stakes World of Programmatic Ad Operations

Programmatic advertising is a complex symphony of technology, data, and human expertise. Ad Operations teams are the conductors, responsible for launching and managing campaigns that must be flawless: correctly targeted, accurately budgeted, and live on time. A single error—a misplaced decimal in a budget, an incorrect targeting parameter—can waste thousands of dollars in ad spend and irreparably damage client relationships. In this context, quality assurance is the most critical, yet often most undervalued, function. Without a proactive, structured approach to QA, teams are doomed to a reactive cycle of errors, rework, and escalations.

3. The Pre-Intervention Landscape: A Case Study in Systemic Fragility

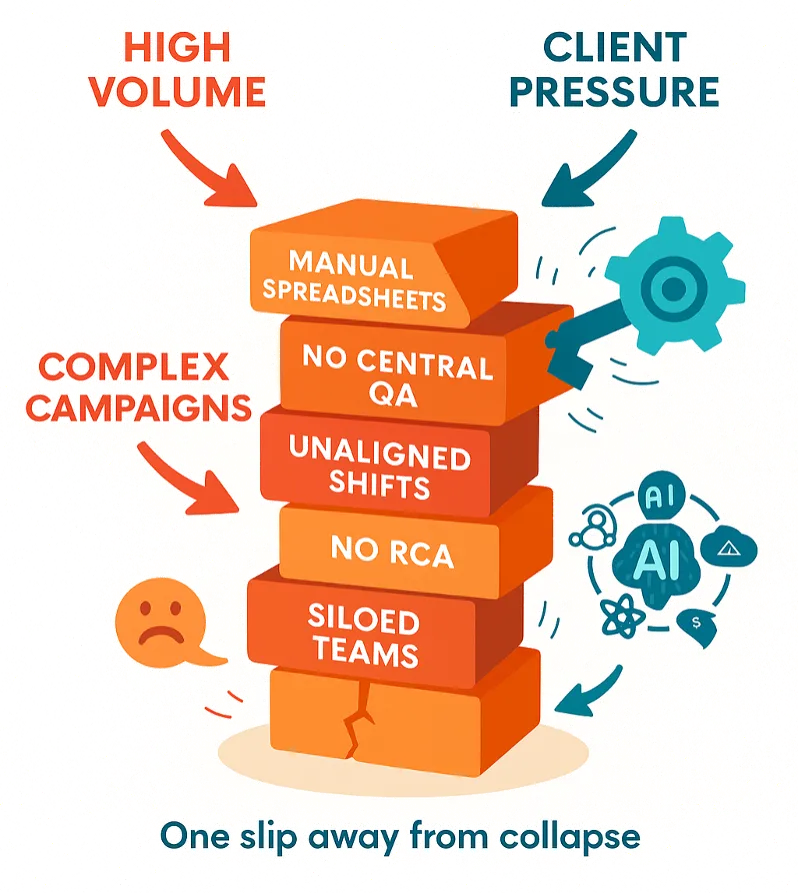

The challenges faced by the DSP team are endemic across the industry.

3.1. The Cost of Error: Beyond the 25% Metric

A 25% error rate is not just a number; it has tangible downstream costs:

- Financial Cost: Wasted ad spend and manual effort spent on rework.

- Reputational Cost: Erosion of client trust and brand credibility.

- Human Cost: Team morale suffers under the constant pressure of fixes and escalations.

3.2. Diagnosing the Core Dysfunctions

Analysis revealed the root causes were systemic, not individual:

- Fragmented QA: No standardized process; quality was everyone's responsibility and therefore no one's.

- Manual Tracking: Reliance on spreadsheets led to missed requests, duplication, and zero visibility into workload or progress.

- Misaligned Resources: Staffing schedules were based on convenience, not campaign inflow data, creating bottlenecks during peak times.

- No Learning Loop: Errors were corrected individually but never analyzed collectively to prevent recurrence.

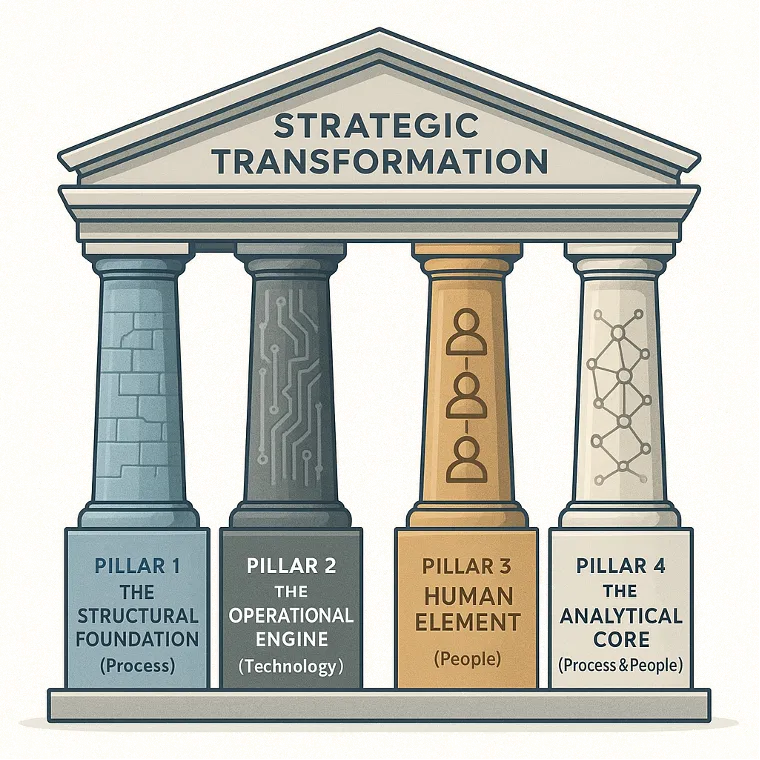

4. The Strategic Blueprint: A Four-Pillar Transformation Framework

The solution was a holistic approach that addressed people, process, and technology simultaneously.

- Pillar 1: The Structural Foundation (Process)

- Pillar 2: The Operational Engine (Technology)

- Pillar 3: The Human Element (People)

- Pillar 4: The Analytical Core (Process & People)

4.1 Pillar 1: The Structural Foundation -- Designing a Centralized QA Framework

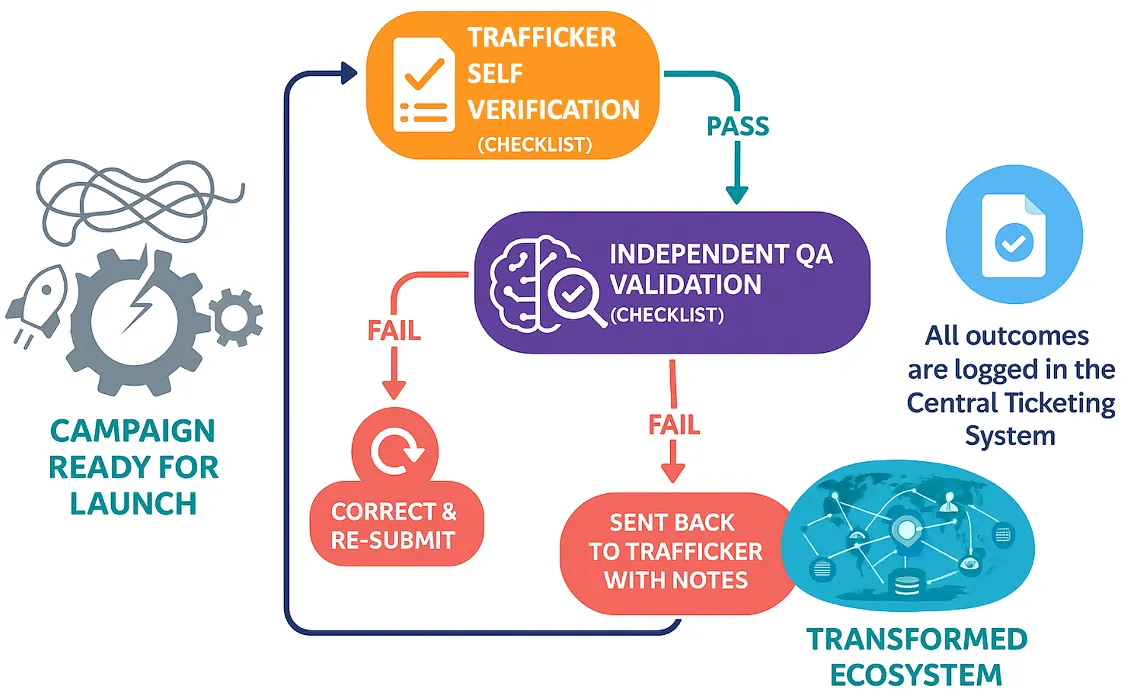

The first step was to replace ad-hoc checking with a rigorous, standardized process.

- QA Checklists: Developed comprehensive checklists tailored to different campaign types, ensuring every critical element (budget, dates, targeting, creatives) was verified.

4.1.1 The Two-Stage Process:

- Self-Verification: The trafficker is the first line of defense, required to complete the checklist before submitting a campaign for launch.

- Independent QA Validation: A dedicated QA specialist reviews the campaign against the same checklist, providing an objective second layer of defense.
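The two-stage process above can be sketched in code. This is a minimal illustration, not the team's actual tooling: the `Campaign` fields, the individual check functions, and the `SignOff` record are all hypothetical names chosen to mirror the checklist elements (budget, dates, targeting, creatives). The key design point it shows is that launch requires a clean self-check by the trafficker and a clean check by a different person.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Campaign:
    # Hypothetical fields mirroring the checklist elements named above.
    name: str
    budget: float
    start: date
    end: date
    targeting: dict = field(default_factory=dict)
    creatives: list = field(default_factory=list)


# Each check returns an error message, or None when the element passes.
def check_budget(c):
    return None if c.budget > 0 else "budget must be positive"


def check_dates(c):
    return None if c.start <= c.end else "start date is after end date"


def check_targeting(c):
    return None if c.targeting else "no targeting parameters set"


def check_creatives(c):
    return None if c.creatives else "no creatives attached"


CHECKLIST = [check_budget, check_dates, check_targeting, check_creatives]


def run_checklist(campaign):
    """Return the list of checklist failures (empty means every check passed)."""
    results = (check(campaign) for check in CHECKLIST)
    return [msg for msg in results if msg is not None]


@dataclass
class SignOff:
    reviewer: str
    stage: str      # "self" (trafficker) or "qa" (independent validation)
    findings: list  # checklist failures recorded at this stage


def ready_for_launch(trafficker, sign_offs):
    """Both stages must be clean, and the QA reviewer must not be the trafficker."""
    self_ok = any(
        s.stage == "self" and s.reviewer == trafficker and not s.findings
        for s in sign_offs
    )
    qa_ok = any(
        s.stage == "qa" and s.reviewer != trafficker and not s.findings
        for s in sign_offs
    )
    return self_ok and qa_ok


campaign = Campaign(
    "spring_promo", 5000.0, date(2024, 3, 1), date(2024, 3, 31),
    {"geo": "US"}, ["banner_300x250"],
)
sign_offs = [
    SignOff("alice", "self", run_checklist(campaign)),
    SignOff("bob", "qa", run_checklist(campaign)),
]
print(ready_for_launch("alice", sign_offs))
```

In practice many checklist items need human judgment and cannot be automated this way; the sketch only covers the mechanical checks and the sign-off rule.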

4.2 Pillar 2: The Operational Engine -- Implementing a Centralized Ticketing System

A centralized ticketing system (e.g., Jira, Asana, ServiceNow) replaced scattered spreadsheets and emails.

Benefits:

- Universal Visibility: Every stakeholder could see the status of every request.

- Automated Prioritization & SLA Tracking: The system automatically flagged at-risk tickets.

- Audit Trail: A complete history of every action, decision, and error, invaluable for training and compliance.

- Workload Management: Managers could see team capacity and redistribute work in real time.
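As an illustration of how automated SLA tracking might flag at-risk tickets, here is a minimal sketch. It does not use the API of Jira, Asana, or ServiceNow; the `Ticket` record, the status labels, and the 80% warning threshold (`warn_fraction`) are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Ticket:
    # Hypothetical ticket record; real ticketing tools expose richer fields.
    ticket_id: str
    created: datetime
    sla: timedelta
    closed: bool = False


def sla_status(ticket, now, warn_fraction=0.8):
    """Classify a ticket by how much of its SLA window has elapsed.

    warn_fraction is an assumed threshold: an open ticket is "at_risk"
    once that share of the SLA window has been used up.
    """
    if ticket.closed:
        return "closed"
    elapsed = now - ticket.created
    if elapsed >= ticket.sla:
        return "breached"
    if elapsed >= ticket.sla * warn_fraction:
        return "at_risk"
    return "on_track"


now = datetime(2024, 1, 1, 12, 0)
queue = [
    Ticket("T-1", datetime(2024, 1, 1, 10, 0), timedelta(hours=5)),
    Ticket("T-2", datetime(2024, 1, 1, 7, 30), timedelta(hours=5)),
    Ticket("T-3", datetime(2024, 1, 1, 6, 0), timedelta(hours=5)),
]
at_risk = [t.ticket_id for t in queue if sla_status(t, now) == "at_risk"]
print(at_risk)
```

The same classification can drive a dashboard or a notification rule; the threshold would be tuned to the team's own SLA definitions.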

4.3 Pillar 3: The Human Element -- Establishing a Dedicated QA Function

Quality requires dedicated focus. A specialized QA team was established.

- Role: This team's sole purpose was to perform the independent validation, free from the pressure of trafficking quotas.

- Expertise: QA members received advanced training on platform intricacies and common error patterns.

- Feedback Mechanism: QA findings were not punitive; they were the primary input for training, SOP updates, and knowledge sharing, closing the loop between error detection and prevention.

4.4 Pillar 4: The Analytical Core -- Instituting Root Cause Analysis (RCA)

The most crucial pillar was building a culture of continuous learning from mistakes.

The Four Error Categories:

- Human Error: Fatigue, oversight. Solution: Process redesign, shift alignment.

- Knowledge Error: Lack of training. Solution: Targeted retraining.

- System Error: Tool failure. Solution: IT ticket, tool enhancement.

- Process Error: Flawed SOP. Solution: Process redesign.

The RCA Methodology: A monthly meeting was held to review all errors. Each error was tagged with one of the four categories, and a standardized template was used to document its root cause and the preventive action plan.
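The tagging step lends itself to a simple data structure. The sketch below is one possible shape for an RCA record, assuming hypothetical field names (`ticket_id`, `root_cause`, `preventive_action`) and sample records invented for the example; the category names and default solutions are taken from the list above.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class ErrorCategory(Enum):
    HUMAN = "Human Error"
    KNOWLEDGE = "Knowledge Error"
    SYSTEM = "System Error"
    PROCESS = "Process Error"


# Default preventive actions, copied from the four categories above.
DEFAULT_ACTIONS = {
    ErrorCategory.HUMAN: "Process redesign, shift alignment",
    ErrorCategory.KNOWLEDGE: "Targeted retraining",
    ErrorCategory.SYSTEM: "IT ticket, tool enhancement",
    ErrorCategory.PROCESS: "Process redesign",
}


@dataclass
class RcaRecord:
    # Hypothetical template fields for one reviewed error.
    ticket_id: str
    category: ErrorCategory
    root_cause: str
    preventive_action: str = ""

    def __post_init__(self):
        # Fall back to the category's default action if none was written.
        if not self.preventive_action:
            self.preventive_action = DEFAULT_ACTIONS[self.category]


def monthly_summary(records):
    """Count errors per category, most frequent first."""
    return Counter(r.category for r in records).most_common()


# Invented sample data for illustration only.
records = [
    RcaRecord("T-101", ErrorCategory.KNOWLEDGE, "Unfamiliar with new targeting option"),
    RcaRecord("T-102", ErrorCategory.HUMAN, "Budget typed with misplaced decimal"),
    RcaRecord("T-103", ErrorCategory.KNOWLEDGE, "Did not know creative spec limits"),
]
for category, count in monthly_summary(records):
    print(category.value, count)
```

Sorting categories by frequency gives the monthly meeting a starting point for deciding where training or process changes would prevent the most recurrences.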

5. The Knowledge Nexus: Creating a Dynamic Learning Repository

The insights from RCA and QA were codified into a living knowledge base.

- Content: Step-by-step guides, FAQs, screenshots, and a "Hall of Fame" for common errors.

- Impact: This became the first port of call for traffickers, reducing dependency on subject matter experts and accelerating the onboarding of new hires.

- Maintenance: The knowledge base was updated monthly with new learnings, ensuring it never became obsolete.

6. Measuring Success: Quantitative and Qualitative Results

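The impact of the four-pillar framework was dramatic and rapid. Within two to three months, the error rate fell from 25% to under 1%, and SLA adherence rose from 75% to 97%.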
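To put the headline figures from the Executive Summary in perspective, the short sketch below works through the arithmetic. Because the post-intervention error rate is reported only as "<1%", the code uses 1% as an upper bound, so the relative reduction it computes is a lower bound. The helper names are illustrative.

```python
def relative_reduction(before, after):
    """Share of the original rate that was eliminated."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


def percentage_point_change(before_pct, after_pct):
    """Absolute change between two percentages, in percentage points."""
    return after_pct - before_pct


# Error rate: 25% before, reported as "<1%" after (1% used as an upper bound).
error_drop = relative_reduction(0.25, 0.01)
# SLA adherence: 75% before, 97% after.
sla_gain = percentage_point_change(75, 97)

print(f"Error rate cut by at least {error_drop:.0%}")
print(f"SLA adherence up {sla_gain} percentage points")
```

Under that bound, the error rate fell by at least 96% in relative terms, and SLA adherence improved by 22 percentage points.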

7. Analysis: Key Success Factors and Replicable Principles

- Leadership Commitment: Quality was treated as a strategic priority, not a tactical afterthought.

- Systemic Thinking: The solution addressed the entire system (process, tech, people), not just symptoms.

- Data-Driven Discipline: The RCA process provided objective data to drive investment in training and process change.

- Blame-Free Culture: Errors were framed as opportunities for system improvement, not individual failure.

- Continuous Investment: The knowledge base and QA team required ongoing resources, which were justified by the massive ROI.

8. Conclusion: From Firefighting to Future-Proofing — The New Quality-Ops Model

This case study demonstrates that a high error rate is not an inevitable cost of doing business in a complex digital environment. It is a symptom of a broken system. By implementing a structured framework built on centralized processes, dedicated expertise, and a relentless focus on root-cause analysis, Ad Operations teams can transform themselves from cost centers fraught with risk into scalable, efficient, and highly trusted engines of growth. The future of AdOps is not just faster; it is designed to prevent errors before they reach the client. This blueprint provides the roadmap to get there.

9. Appendix: Implementation Toolkit

- QA Checklist Template: A sample checklist for a programmatic campaign.

- RCA Template: A standardized form for documenting and analyzing errors.

- Knowledge Base Index: A suggested structure for a team knowledge repository.

- Staggered Shift Planning Guide: How to align shifts with campaign inflow data.
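As a starting point for the shift planning guide, one simple way to align staffing with campaign inflow is to split headcount across shifts in proportion to each shift's request volume. The sketch below uses the largest-remainder method for rounding; this is an assumed approach for illustration, not the method documented by the team, and the shift names and inflow counts are invented.

```python
def allocate_staff(inflow_by_shift, headcount):
    """Split headcount across shifts in proportion to request inflow.

    Uses largest-remainder rounding so the allocations sum to headcount.
    """
    total = sum(inflow_by_shift.values())
    if total <= 0:
        raise ValueError("inflow must be positive")
    exact = {s: headcount * n / total for s, n in inflow_by_shift.items()}
    alloc = {s: int(x) for s, x in exact.items()}
    leftover = headcount - sum(alloc.values())
    # Give remaining seats to the shifts with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda s: exact[s] - alloc[s], reverse=True)
    for shift in by_remainder[:leftover]:
        alloc[shift] += 1
    return alloc


# Invented weekly request counts per shift, for illustration only.
inflow = {"morning": 50, "afternoon": 30, "night": 20}
print(allocate_staff(inflow, 10))
```

A real plan would also need minimum coverage per shift and allowance for QA capacity; proportional allocation is only the first pass.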
